
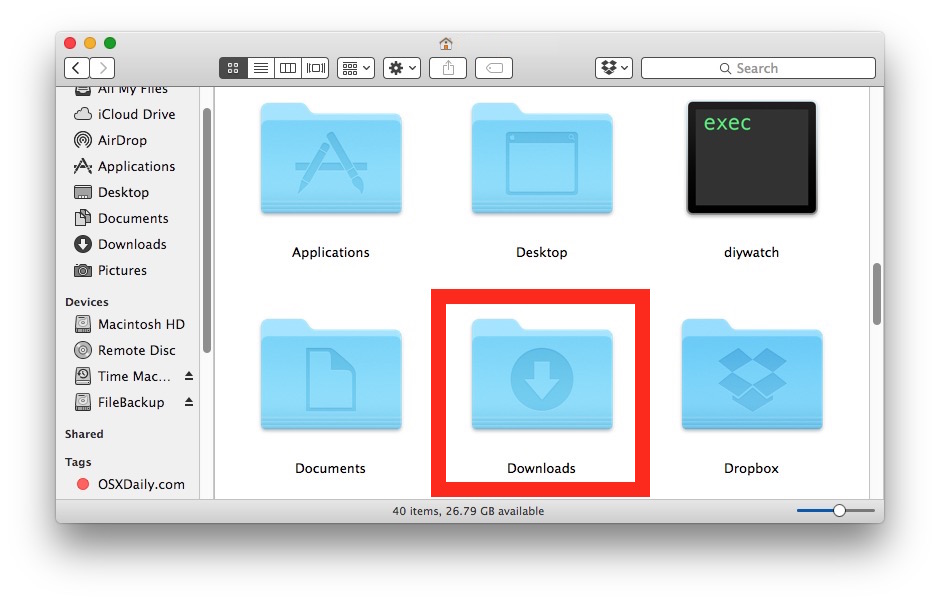

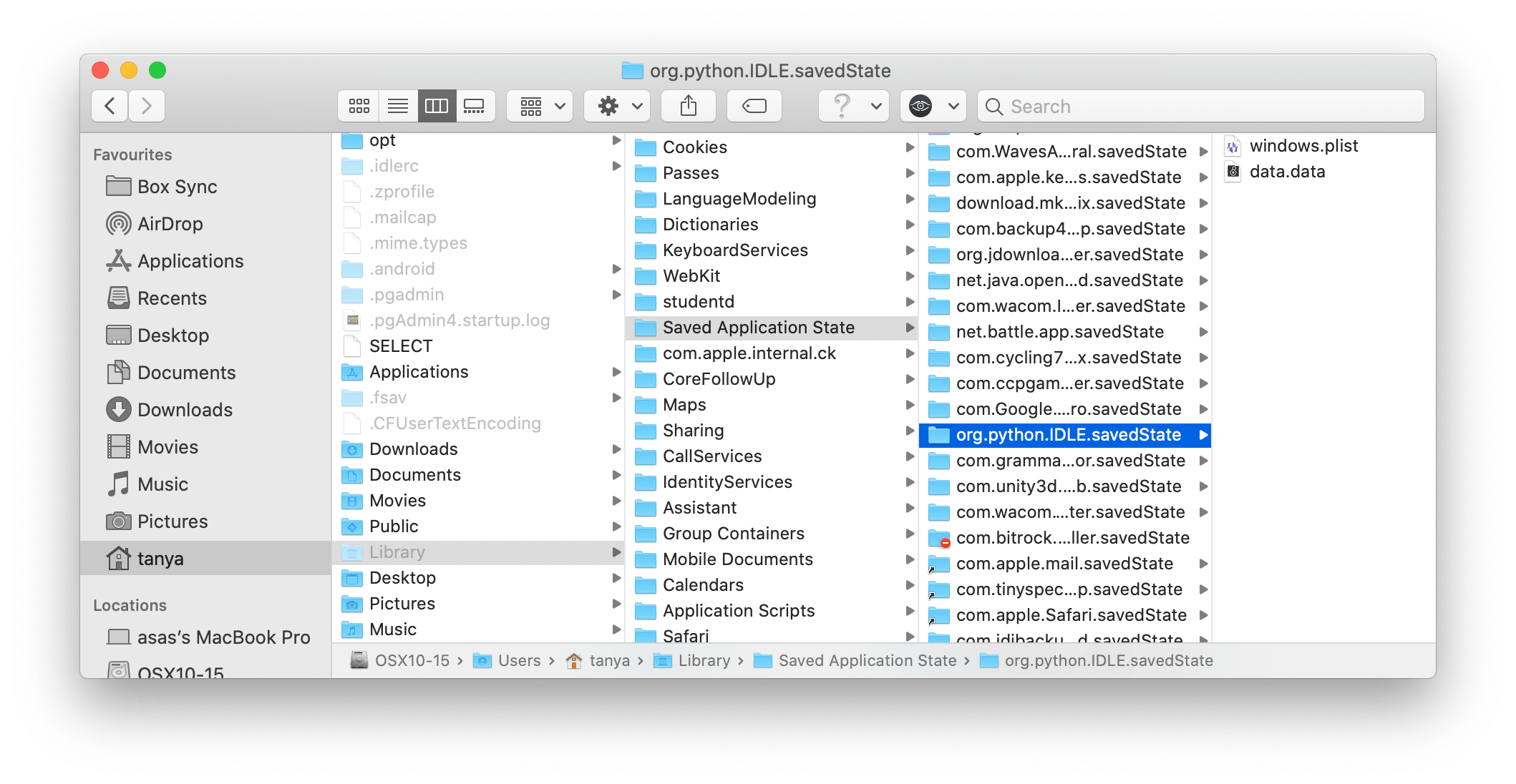

Recently, a friend asked for a simple way to set up a data pipeline for analyzing the daily results from the New York Times's COVID-19 dataset:

"How do I download this data set every day and load it into BigQuery so my friends can do analysis on it? Preferably in a serverless and cost-effective manner. Oh, and I'm also not that good at programming in Python."

Here's a sample of the New York Times's COVID-19 dataset.

Of course, a simple option is to use bash and cron. In that case, you'd download the data each day using curl or wget, then import it into BigQuery using Google Cloud SDK commands such as `bq load`. However, this requires a machine to run the code, and he didn't feel like running it from a laptop or spinning up a cloud server. Thankfully, Google Cloud (GCP) offers some awesome serverless tools that let you run a workflow like this for next to no cost.

In this tutorial, I'm going to show you how to set up a serverless data pipeline in GCP that will do the following:

- Schedule the download of a csv file from the internet
- Import the data into BigQuery

Note: this tutorial generalizes to any similar workflow where you need to download a file and import it into BigQuery. There are a few moving parts, but it's not too complicated.

Also, the NY Times COVID dataset is now available in BigQuery's public datasets. So you can take the easy way and view the data directly in BigQuery's public dataset, or continue following along with this example.

We will need to create a few things to get started. You'll need a Google Cloud account and a project, as well as API access to Cloud Storage, Cloud Functions, Cloud Scheduler, Pub/Sub, and BigQuery.

Even though this example is for the NY Times COVID dataset, it can be used for any situation where you download a file and import it into BigQuery. All of the code examples are located in this GitHub repo.

Here's a summary of what we're going to build.

Let's get started!

## 1 - Create A Cloud Storage Bucket

Setting up a Cloud Storage bucket is pretty straightforward. It's so straightforward that I'll just give you a link to the official GCP documentation, which walks through an example.

## 2 - Create A BigQuery Dataset And Table

Just like the Cloud Storage bucket, creating a BigQuery dataset and table is very simple. Just remember that you first create a dataset, then create a table.

When you create your BigQuery table, you'll need to define a schema with the following fields. These match the fields in the header of the NY Times COVID csv file:

`date,county,state,fips,cases,deaths`

Create the BigQuery table, which should have a schema that looks like this.

## 3 - Create The Cloud Functions

Cloud Functions allow you to run discrete parts of code in an event-driven and serverless manner. They are perfect for a project like this, where you need a lightweight and cheap workflow. If you're familiar with AWS Lambda, Cloud Functions are the GCP equivalent. Cloud Functions are triggered by events, such as Pub/Sub messages or objects landing in Cloud Storage. You can learn more about GCP Cloud Functions here.

You'll need to create two Cloud Functions. One Cloud Function will download the data from a url and store it in Google Cloud Storage. The other Cloud Function will load this data from Google Cloud Storage into BigQuery.

Why not just combine the two Cloud Functions and do this all in one go? You can certainly do it that way if you want. I'm a fan of keeping Cloud Functions as discrete and single-purpose as possible. Also, each function gives you a module that can be reused in other settings with a little modification. That said, there's nothing to prevent you from combining the two if that's what you're into.

### Cloud Function 1 - Download data from a url, then store it in Google Cloud Storage

Cloud Functions are triggered by events: HTTP requests, Pub/Sub messages, objects landing in Cloud Storage, etc. This Cloud Function will be triggered by Pub/Sub, so you'll need to create a Pub/Sub topic as you set up the Cloud Function. Create a Pub/Sub topic, as illustrated below. You can learn more about triggering Cloud Functions via Pub/Sub here. Remember the name of the Pub/Sub topic you've created, because Cloud Scheduler will need this topic in order to schedule the download of the file. In this case, we'll name our Pub/Sub topic get-file-from-url.

Next, let's fill in the settings for this Cloud Function:

- Memory allocated: 256 MiB should be fine
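The following is a minimal Python sketch of what Cloud Function 1 might contain. It assumes a Pub/Sub background function with the `(event, context)` signature that receives a JSON message carrying a `url` and a `bucket`. The message format, the entry-point name `get_file_from_url`, and the dated object-naming scheme are hypothetical and do not come from the post. Only the upload step needs the `google-cloud-storage` package. That package is imported inside `upload_to_gcs`, so the other helpers run with the standard library alone.

```python
import base64
import datetime
import json
import urllib.request

# Hypothetical message format: the Cloud Scheduler job publishes
# {"url": "...", "bucket": "..."} to the get-file-from-url topic.
# The post does not specify a payload; this shape is an assumption.


def parse_pubsub_event(event):
    """Decode the base64-encoded 'data' field of a Pub/Sub background event."""
    raw = base64.b64decode(event.get("data", b"")).decode("utf-8")
    return json.loads(raw) if raw else {}


def object_name_for(url, day):
    """Build a dated object name such as 2020-05-01/us-counties.csv (hypothetical scheme)."""
    filename = url.rstrip("/").rsplit("/", 1)[-1]
    return f"{day.isoformat()}/{filename}"


def download(url, timeout=60):
    """Fetch the file body as bytes.

    This reads the whole response into memory, which is only safe for files
    comfortably below the function's memory allocation (e.g. 256 MiB).
    """
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read()


def upload_to_gcs(bucket_name, object_name, data):
    """Store bytes in Cloud Storage (requires the google-cloud-storage package)."""
    from google.cloud import storage  # imported here so the helpers above work without it

    client = storage.Client()
    blob = client.bucket(bucket_name).blob(object_name)
    blob.upload_from_string(data, content_type="text/csv")


def get_file_from_url(event, context):
    """Hypothetical entry point for Cloud Function 1, triggered by Pub/Sub."""
    message = parse_pubsub_event(event)
    url = message["url"]
    bucket = message["bucket"]
    name = object_name_for(url, datetime.date.today())
    upload_to_gcs(bucket, name, download(url))
    return name
```

With this design, the Cloud Scheduler job only has to publish a small message to the get-file-from-url topic. Keeping the URL and bucket in the message rather than hard-coding them is one way to make the function reusable for other files, in keeping with the single-purpose approach described above.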